The most repeated piece of AI advice in the market right now is: start with a use case. A better starting point comes earlier: before deciding which tools to deploy, agree the outcomes you want to improve, the baseline for each, and the metrics that will prove the improvement. That step happens last in most organisations. It should happen first.

Starting with a use case is how many AI programmes still get framed: find a visible process that is slow or manual, prove the tool works, then try to scale. It sounds like progress. For leaders trying to prove the ROI of AI to the business, it often is not.

It is one of the reasons many boards still struggle to get a straight answer on AI ROI.

At Codestone, AI ROI is not treated as a reporting exercise after deployment. It is designed into the roadmap before technology decisions are made. We built our AI Endgame series because we kept having the same conversation: a business had deployed AI tools, the tools were working as advertised, but nobody could connect that back to a business outcome the board cared about. The use case had been solved. The problem it was supposed to address had not been defined clearly enough to measure.

Starting with a use case is still starting with the technology. The technology is only useful if it improves a decision the business already cares about.

What use case thinking actually does

When you start with a use case, you anchor the whole programme to a capability. Can AI summarise emails faster? Yes. Can it flag invoice anomalies? Yes. Can it generate first drafts of reports? Yes.

These are all true. None of them is a business case.

A business case requires a before-and-after state for something the business cares about. Revenue. Margin. Close time. Decision speed. Risk exposure. Use cases sit upstream of those metrics, and connecting the two requires a chain of reasoning. That chain is where AI ROI arguments collapse under board scrutiny.

The finance director may welcome faster invoice processing, but the board-level question is whether it reduces cost, improves cash visibility, or lowers the risk of errors reaching the ledger. Those are the metrics that matter. A use case does not automatically produce them.

What the data shows

This is not an isolated problem. BCG research from October 2024 found that 74% of companies had yet to show tangible value from AI, based on a survey of 1,000 senior executives across 59 countries. NTT DATA has highlighted that between 70% and 85% of GenAI deployment efforts are failing to meet expected outcomes, and its Global GenAI Report also found that 51% of organisations have not aligned their GenAI strategy with their business plans at all.

McKinsey’s State of AI report (March 2025) adds a further dimension: fewer than one in five organisations are tracking KPIs for their gen AI programmes. The programmes exist. The measurement discipline does not.

The common thread is not that AI does not work. It is that most organisations deployed tools before they agreed on what success looked like.

What we see go wrong

The failure patterns are consistent enough to be worth naming.

The first: the AI does exactly what it is asked to do with the data it is given. The data is not fit for purpose. The programme stalls. The board draws the wrong conclusion about AI.

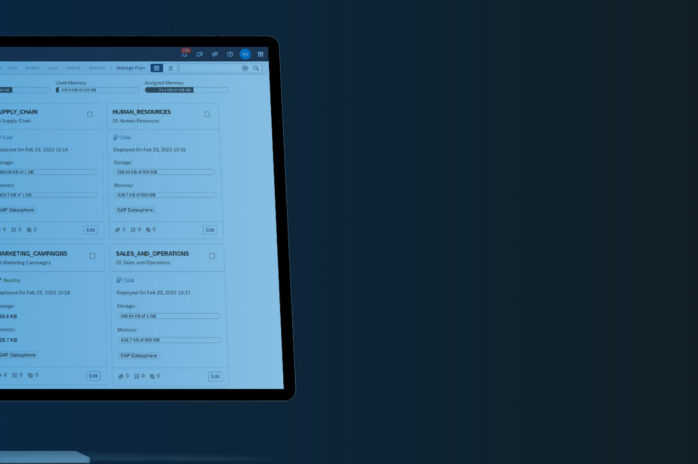

Data foundation is not a technical detail. It is the single biggest determinant of whether AI delivers or disappoints. Codestone’s AI Endgame Session 2 is dedicated entirely to this: why data readiness is the prerequisite most organisations skip and what getting it right actually involves. For businesses using Microsoft tools, the bonus session with Mike covers how Copilot, Fabric, and Power BI work together to build the connected data layer that makes AI outputs reliable.

A second pattern: the AI initiative runs for a year and someone is asked to prove ROI. They look for metrics that support a positive story. No baseline was agreed before deployment, so the before-and-after comparison is contested. The ROI case becomes a negotiation rather than a measurement.

A third: a use case gets selected because it is technically straightforward, but it crosses a departmental boundary. When the tool delivers, nobody is accountable for converting the efficiency gain into a business outcome. The saving sits in a process rather than a budget line. It never reaches the board.

Start with the decision

The alternative is to start with the decision that needs to improve, not the tool that might help.

Every material business outcome is the product of a decision. Revenue tracks back to pricing calls, pipeline prioritisation, and customer retention. Margin lives inside procurement and operational cost. Risk exposure comes down to supplier dependency, compliance gaps, and what the finance function can actually see.

Ask which decisions in this business are currently being made with incomplete or delayed information. The CFO closing the month twelve days in because consolidation is done manually. The operations director raising emergency purchase orders because demand planning is based on last year’s actuals. The MD who cannot see group-wide performance without waiting for the finance team to compile it from three different systems.

Simon Fenech, Head of Business Development at Codestone, put the principle clearly during the AI Endgame series:

“You always have to start with your outcome. You’ll then know what metrics you’re going to measure, and then you will know how to measure them. It sounds really simple, but a lot of organisations do miss that because of the velocity you have to go at.” (Simon Fenech, Codestone AI Endgame)

That sequencing, outcome first, metric second, tool third, is what separates programmes that can answer the board’s question from those that cannot.

How this changes what you build

Decision-first thinking changes the shape of an AI programme in three practical ways.

It forces a baseline before deployment. If the decision is to close the month faster, current close time is measured before anything is deployed. The target is agreed. The metric is unambiguous. Twelve months later, there is nothing to argue about.

It surfaces data quality issues early. If the decision requires reliable consolidated financial data and that data currently lives in three systems with known reconciliation gaps, that problem gets addressed before the AI is configured around broken inputs.

It creates ownership. When an AI initiative is anchored to a specific decision owned by a specific person, accountability follows. The FD who owns the close-time decision is motivated to see the tool succeed. The programme has a sponsor with skin in the game, someone accountable for the outcome rather than just the spend.

Where SAP and Codestone fit

For organisations running SAP Business One, SAP Business ByDesign, or SAP S/4HANA, the decision-first approach has a specific implication.

Across the SAP ecosystem, AI and machine learning capabilities increasingly sit close to the workflows where business decisions are made, from embedded analytics and predictive planning to automation and anomaly detection. The real work is making sure the right data, workflows, and decision points are in place before those capabilities are applied.

This is where Codestone’s role is most direct. Before any AI capability is activated, the questions we ask are: which decision is this meant to improve, is the data it relies on in good enough shape, and what does the before-and-after metric look like? Those three questions, in that order, are what separate an AI programme that produces board-level ROI from one that produces a list of use cases nobody can value.

The organisations getting the most from AI tend to be the most disciplined about defining what they need it to improve before they build anything. Technological ambition rarely determines outcomes.

What this means for the board conversation

The next time AI ROI comes up in a board meeting, the answer needs to be a set of business outcomes with before-and-after metrics attached. A list of tools deployed or hours saved will not hold the room.

The board has read the same reports as everyone else. The question is whether this organisation is getting a return on its investment, specifically and measurably, in areas that matter to the business.

That answer requires outcomes to have been defined before the tools were turned on. For most organisations, it is not too late to reframe the programme. But the longer it runs on tool metrics rather than outcome metrics, the harder that board conversation becomes.

Codestone built the AI Endgame series to give SMB and mid-market businesses the same quality of AI strategic thinking that larger organisations pay significant consulting fees for. All four sessions are available on demand. Or get in touch directly to talk through what a decision-first AI roadmap looks like for your business.

Frequently asked questions

Why do most AI programmes fail to prove ROI?

Because value is not defined before deployment begins. BCG found 74% of companies have yet to show tangible value from AI. The most common cause is that programmes are built around use cases that prove a tool works, not decisions that connect to metrics the board tracks.

What is the difference between a use case and a decision?

A use case describes what AI can do. A decision describes what the business needs to do better. The use case is a means. The decision is the point. Starting with the decision keeps the programme anchored to outcomes the business already cares about and can measure.

How do you measure AI ROI before deployment?

Define the decision you want to improve, identify the metric that reflects it, and establish a baseline before any AI tool is switched on. Agree on a target and a review date. This makes the ROI case a measurement twelve months later, not a reconstruction.

Why does data quality affect AI ROI so often?

AI draws conclusions from the data it is given. If that data has gaps or reconciliation errors, the outputs will be unreliable regardless of the tool. Most AI programme failures we encounter trace back to data quality issues not identified before the project started.

What is the first step for a business building an AI ROI case?

Identify one decision that is currently being made with incomplete or delayed information. Map what changes commercially if that decision improves. Agree on the metric and the baseline before deploying anything. That is sufficient to build a credible board-level business case for a first initiative.